The Best Image-to-Image Workflow for Brand Consistency in 2026

When teams talk about visual AI, they often focus on novelty first. They want to know whether a tool can generate a striking image, imitate a style, or produce a dramatic before-and-after result. But in real commercial work, the harder problem is rarely imagination alone. It is consistency. A campaign needs product images that still feel like the same brand. A content series needs visuals that vary without losing identity. That is why Image to Image stands out less as a gimmick and more as a practical system for visual continuity in 2026.

The strongest value of image-based transformation is that it starts from something already useful. Instead of rebuilding a visual language from scratch every time, it lets creators extend an existing one. In my view, that changes the role of AI from an unpredictable generator into a more usable production assistant. It does not remove taste, direction, or review. What it does is reduce the amount of randomness between one asset and the next.

Why Brand Work Needs Controlled Variation

Modern brand content is not built around a single finished image anymore. A visual may begin as a product shot, then become a landing page hero, a paid ad variation, a seasonal version, and later a social media asset. Each piece needs enough flexibility to fit its context, but enough stability to remain recognizable.

Consistency Is A Creative Requirement Now

For many teams, inconsistency is more damaging than a lack of originality. A campaign can survive modest visuals. It struggles when every asset seems to come from a different visual system.

This is where image-to-image workflows become especially useful. Starting from a source image means composition, subject logic, and visual hierarchy are already present. The AI is not inventing identity from zero. It is carrying identity forward into a new form.

Variation Without Drift Is Hard To Do Manually

Traditional editing workflows can absolutely achieve consistency, but they take time. Every variation requires attention to lighting, framing, styling, and detail preservation. As the number of outputs increases, so does the risk of drift.

In my observation, image-to-image systems reduce that pressure by treating the original image as a visual anchor. The result is not total control, but it is usually more stable than asking a model to recreate the same brand feeling from a text prompt alone.

How The Workflow Matches Real Production Needs

The official Image to Image AI process is simple, which is part of its strength. It does not ask users to learn a complicated production stack before getting value from it.

Step 1: Upload A Source Or Reference Image

Everything begins with a visual base. That can be a product photo, campaign draft, character render, or previous approved asset.

For brand work, this matters because the starting image already contains important signals. It may carry approved composition, lighting direction, material texture, or packaging detail. Rather than replacing those decisions, the system builds on them.

Step 2: Describe The Intended Transformation

The prompt is used to define what should change. That may be mood, style, environment, detail level, or presentation context.

This is a subtle but important difference from text-only generation. You are not describing an entire visual world. You are describing the gap between what exists and what you need next.

Step 3: Select The Model That Fits The Job

The platform presents multiple models rather than one generic solution. That reflects a realistic view of production. Fast exploration, precise editing, and continuity-focused transformation are not the same task.

For teams, this is useful because model choice becomes part of workflow design rather than an afterthought.
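The three steps above can be pictured as a small request object. What follows is a minimal Python sketch, not the platform's actual API: the class name `TransformRequest`, its fields, the model identifier strings, and the validation thresholds are all assumptions made for illustration.

```python
from dataclasses import dataclass
from pathlib import Path

# Hypothetical identifiers. The article names Nano Banana and Flux,
# but these exact strings are invented for this sketch.
KNOWN_MODELS = {"nano-banana", "flux"}
RASTER_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


@dataclass(frozen=True)
class TransformRequest:
    """One image-to-image job: a source, a described change, and a model."""

    source_image: Path          # Step 1: the approved visual base
    change_prompt: str          # Step 2: the gap between what exists and what is needed
    model: str                  # Step 3: the model chosen for this kind of job
    reference_images: tuple = ()  # optional extra references (assumed field)

    def problems(self) -> list:
        """Return human-readable issues; an empty list means the request looks complete."""
        issues = []
        if self.source_image.suffix.lower() not in RASTER_SUFFIXES:
            issues.append("source image should be a common raster format")
        if len(self.change_prompt.split()) < 3:
            issues.append("prompt is too short to describe a change")
        if self.model not in KNOWN_MODELS:
            issues.append(f"unknown model: {self.model}")
        return issues


request = TransformRequest(
    source_image=Path("approved/product-hero.png"),
    change_prompt="Move the product to a warm autumn tabletop scene, keep the label and lighting direction",
    model="nano-banana",
)
print(request.problems())
```

Writing the job down this way keeps the three decisions separate, so a team can change the prompt or the model without touching the approved source image.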

Which Models Matter Most For Brand Consistency

A full model catalog is not necessary to understand the product, but a few models are especially relevant when the goal is controlled output rather than surprise.

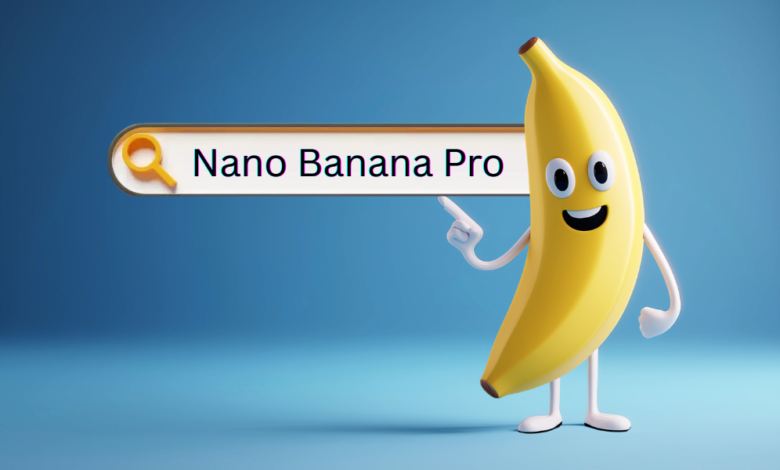

Nano Banana Supports Stable Visual Identity

Nano Banana is the most obvious fit for continuity-focused work. It is positioned around image-to-image transformation, reference image support, and character or style consistency.

That makes it useful for situations such as:

- Adapting one product image into several campaign looks

- Preserving the feel of a spokesperson or character across assets

- Shifting style without losing composition

- Building image sets that still feel like part of one system

Why Reference Support Changes The Quality Of Output

Support for multiple reference images is more important than it first appears. Brand identity is rarely contained in a single image. It usually lives across colors, mood, product details, styling choices, and recurring visual patterns.

Being able to guide the model with more than one reference helps translate those patterns into output. In practice, this often means less reliance on long, over-specified prompts and better continuity.

Flux Helps When The Asset Is Mostly Right

Brand work often involves small, important corrections rather than dramatic rewrites. A product label may need adjustment. A background element may need removal. Text inside an image may need replacement.

Flux appears designed for that kind of task. It allows targeted editing while preserving the rest of the image context. That is often far more useful for professional teams than regenerating the entire image and hoping nothing important changes.

Precision Matters More Than Spectacle

A context-aware editing model may not create the most dramatic demo image, but it solves a real production problem. In commercial workflows, being able to fix one specific thing without breaking five others is extremely valuable.

Why This Matters Across Different Teams

The platform’s usefulness becomes clearer when you look at how different teams actually work.

E-Commerce Teams Need Repeatable Product Presentation

A single product may need clean studio visuals, lifestyle scenes, seasonal variations, and promotional derivatives. Recreating that manually is expensive. Starting from one approved image and transforming it into controlled variants is a more scalable approach.

Marketing Teams Need Fast Asset Families

Campaign work is rarely about one output. It is about families of outputs that feel coherent across channels. Image-to-image workflows help transform one approved visual into multiple on-brand directions without resetting the whole creative process each time.

Content Teams Need Freshness Without Losing Identity

Brands posting frequently need variation, but not reinvention. Too much change weakens recognition. Too little change creates fatigue. Controlled transformation sits in a useful middle zone.

A Practical Feature Comparison For Teams

| Brand Need | Nano Banana | Flux | General Impact |
| --- | --- | --- | --- |
| Preserve subject identity | Strong | Strong in edited areas | Helps keep assets recognizable |
| Adapt approved visuals into variants | Strong | Moderate | Reduces rework across campaigns |
| Edit only a small part of an image | Limited | Strong | Useful for corrections and updates |
| Keep style consistent across outputs | Strong | Strong | Supports brand continuity |
| Handle commercial production use | Supported | Supported | Fits business workflows |

The point of this table is not to declare a winner. It is to show that different models support different parts of the same content system.
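For teams that want to make model choice an explicit workflow step, the comparison above can be encoded as a simple lookup. This is only a sketch: the ratings are copied from the table, while the dictionary layout and the function name `models_rated_strong` are assumptions introduced here, not part of any product.

```python
# Ratings copied from the comparison table; the structure is illustrative.
RATINGS = {
    "Preserve subject identity": {
        "Nano Banana": "Strong",
        "Flux": "Strong in edited areas",
    },
    "Adapt approved visuals into variants": {
        "Nano Banana": "Strong",
        "Flux": "Moderate",
    },
    "Edit only a small part of an image": {
        "Nano Banana": "Limited",
        "Flux": "Strong",
    },
    "Keep style consistent across outputs": {
        "Nano Banana": "Strong",
        "Flux": "Strong",
    },
}


def models_rated_strong(need: str) -> list:
    """Return models with an unqualified "Strong" rating for a brand need.

    Qualified ratings such as "Strong in edited areas" are deliberately
    excluded, so they still require a human decision.
    """
    return [model for model, rating in RATINGS[need].items() if rating == "Strong"]


print(models_rated_strong("Edit only a small part of an image"))
```

A lookup like this does not replace judgment. It simply records the default choice so that it is made the same way each time.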

What Makes This More Than A Trend

A lot of AI tools attract attention because they make creation look easy. But ease is not the same as usefulness. A useful tool fits how work already happens.

Real Teams Work Through Revisions

Creative work in brand environments is rarely linear. Teams review, revise, compare, and adjust. The strongest aspect of image-to-image production is that it supports those revision loops instead of forcing a full restart each time.

Visual Memory Becomes A Workflow Advantage

The source image acts as memory. That may be the most practical distinction between image-to-image and pure prompt generation. The system remembers what matters visually because you show it, not only because you describe it.

Why This Lowers Operational Friction

In my view, the real gain is not just output quality. It is lower friction between versions. Teams spend less time trying to recover what the previous version already got right.

Limits That Deserve Honest Attention

A grounded explanation should mention where the process still depends on human judgment.

The Starting Asset Still Matters

If the source image is weak, the transformation has less to build on. Good results still depend on a useful visual foundation.

Prompt Clarity Still Affects Outcomes

Even with a strong reference image, vague instructions can lead to mixed results. Clear transformation goals usually produce better outputs than broad emotional language.

Several Rounds May Still Be Needed

This is not a magic one-click finishing system. In many cases, the first generation reveals the direction, and later passes improve the final asset.

Why This Feels Especially Relevant In 2026

By 2026, the question is no longer whether AI can generate images. That question has already been answered. The more useful question is whether AI can support repeatable, recognizable, commercially usable visual systems.

That is why this kind of workflow matters. It treats a brand image not as a fixed endpoint, but as a reusable starting point. It allows one approved visual to become many related outputs without losing the logic that made the original effective.

Seen from that angle, the best image-to-image system is not the one that produces the wildest surprise. It is the one that helps teams make more assets while staying visually coherent. And that is exactly why this approach feels increasingly central to serious content production.
